Overview

Most API design documents are written after the API has been built, as backfilled justification for decisions nobody remembers making. This document is the opposite: it's the RFC that defined the contract before a single endpoint was implemented. Every method choice, every response shape, every error code was decided on paper first, because the moment three teams start implementing against an ambiguous spec, the API that ships is nobody's.

The engagement

OpenAPI 3.0 contract design for a neobank's transactional layer — list, detail, annotate, and delete operations on a user's monthly transactions. A specification document, not an implementation.

The continuity

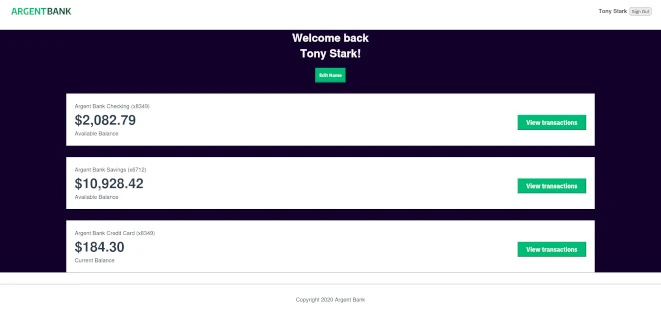

This is Phase 2 of the Argent Bank engagement. Phase 1 shipped the front-end authentication layer against the existing auth API. Phase 2 designs the transactional API that the front-end will consume next, before the back-end team writes a single route.

The design premise

An API contract is a coordination device between three teams — front-end, back-end, QA — each of whom will interpret ambiguity differently. The goal is to make ambiguity impossible, not to make the spec beautiful.

The deliverable

A complete OpenAPI 3.0 YAML specification, importable into Prism for mocking, runnable against Schemathesis for conformance testing, and readable by a non-API-specialist as prose. Documented decisions for every non-obvious choice.

Brief disclosure, consistent with other reference builds in this collection: Argent Bank is a recognizable training brief. This case study is the second of two on the same project — the first covered the front-end security posture, this one covers the API contract. Together they demonstrate that the same engineer who ships the front-end should be able to design the contract the front-end consumes. Most teams split those roles and inherit the coordination problems that come with the split.

The four endpoints, and the decisions behind each

An API is not its route list. An API is the set of decisions that produced its route list. Here are the four endpoints we designed and the reasoning for each non-obvious choice — the kind of decision that most API designs make implicitly and regret explicitly.

1. GET /accounts/{accountId}/transactions — listing monthly operations

The decision: cursor pagination, not offset pagination.

Offset pagination (?page=3&limit=50) is easier to implement and almost universally wrong. It breaks under any form of concurrent write — every new transaction shifts the offsets of everything below it, causing duplicates and omissions in paginated results. For a banking API where new transactions arrive continuously, offset pagination is a data integrity bug dressed as a feature.

We specified cursor pagination based on an opaque token that encodes the last transaction's sorted position. The front-end treats it as an opaque string and passes it back via ?cursor=.... The back-end can change the cursor format without breaking clients.
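To make the difference concrete, here is a minimal sketch of cursor (keyset) pagination over an in-memory list. The Transaction shape, the postedAt sort key, and the URI-escaped JSON encoding are all assumptions for illustration; the spec only commits to the cursor being an opaque string.

```typescript
// Hypothetical transaction shape; only the sort keys matter here.
interface Transaction {
  id: string;       // unique, used as tie-breaker
  postedAt: string; // ISO 8601 UTC timestamp, sorts lexicographically
}

interface Page {
  items: Transaction[];
  nextCursor: string | null;
}

// Newest first; ties broken by id so the ordering is total.
function compareDesc(a: Transaction, b: Transaction): number {
  if (a.postedAt !== b.postedAt) return a.postedAt < b.postedAt ? 1 : -1;
  if (a.id === b.id) return 0;
  return a.id < b.id ? 1 : -1;
}

// The encoding (URI-escaped JSON) is an arbitrary stand-in. Clients must
// treat the result as opaque so the server can change it freely.
function encodeCursor(last: Transaction): string {
  return encodeURIComponent(JSON.stringify([last.postedAt, last.id]));
}

function decodeCursor(cursor: string): Transaction {
  const [postedAt, id] = JSON.parse(decodeURIComponent(cursor)) as [string, string];
  return { id, postedAt };
}

function listTransactions(all: Transaction[], limit: number, cursor?: string): Page {
  const size = Math.max(1, limit);
  const sorted = [...all].sort(compareDesc);
  const last = cursor === undefined ? null : decodeCursor(cursor);
  // Keep only rows that sort strictly after the cursor position.
  const remaining = last === null ? sorted : sorted.filter((t) => compareDesc(last, t) < 0);
  const items = remaining.slice(0, size);
  const hasMore = remaining.length > size;
  return { items, nextCursor: hasMore ? encodeCursor(items[items.length - 1]) : null };
}
```

If a new transaction arrives between the first and second page requests, the second page still starts strictly after the last row the client saw. With ?page=2 the same write would push an already-seen row onto the second page.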

The decision: filter params as query strings, not a POST search endpoint.

Some API designers reach for POST /transactions/search when filtering gets complex, because query strings feel inelegant for multi-field filters. This is a trap. Filters belong in query strings because GET requests are cacheable, idempotent, and safe to retry. A POST search endpoint loses all three properties for no real gain. Specified: ?from=2025-10-01&to=2025-10-31&minAmount=-500&category=groceries, with a documented ceiling on filter complexity that triggers a 400 Bad Request when exceeded.
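A sketch of how a server might validate those query parameters follows. The ceiling value (MAX_FILTERS), the error strings, and the choice to reject unknown filter names are assumptions; the text above only states that a ceiling exists and that exceeding it yields a 400.

```typescript
interface TransactionFilters {
  from?: string;      // inclusive start date, YYYY-MM-DD
  to?: string;        // inclusive end date, YYYY-MM-DD
  minAmount?: number;
  category?: string;
}

type ParseResult =
  | { status: 200; filters: TransactionFilters }
  | { status: 400; error: string };

// Hypothetical ceiling: the source mentions a limit but not its value.
const MAX_FILTERS = 4;
const ISO_DATE = /^\d{4}-\d{2}-\d{2}$/;

function badRequest(error: string): ParseResult {
  return { status: 400, error };
}

// Expects only the filter parameters; cursor and paging are handled elsewhere.
function parseFilters(query: Record<string, string>): ParseResult {
  const keys = Object.keys(query);
  if (keys.length > MAX_FILTERS) return badRequest("TOO_MANY_FILTERS");

  const filters: TransactionFilters = {};
  for (const key of keys) {
    const value = query[key];
    if (key === "from" || key === "to") {
      if (!ISO_DATE.test(value)) return badRequest("INVALID_DATE");
      if (key === "from") filters.from = value;
      else filters.to = value;
    } else if (key === "minAmount") {
      const amount = Number(value);
      if (value.trim() === "" || !Number.isFinite(amount)) return badRequest("INVALID_AMOUNT");
      filters.minAmount = amount;
    } else if (key === "category") {
      filters.category = value;
    } else {
      return badRequest("UNKNOWN_FILTER");
    }
  }
  if (filters.from !== undefined && filters.to !== undefined && filters.from > filters.to) {
    return badRequest("INVERTED_DATE_RANGE");
  }
  return { status: 200, filters };
}
```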

2. GET /accounts/{accountId}/transactions/{transactionId} — detail view

The decision: no embedded nested resources.

The detail endpoint returns the transaction and its core fields. It does not return the merchant entity, the category object, or the associated card metadata as embedded objects — it returns their IDs and the client fetches them separately if needed.

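This rejects the embed-everything school of API design (HAL-style _embedded resources and eager expansion), which ships 200KB responses for 2KB of data. Strictly speaking, HATEOAS concerns hypermedia links rather than embedding, so the objection here is to embedding, not to links. We specified flat responses with explicit references: related resources are cached separately by the client, the wire size is smaller, and there is no over-fetching tax on the 95% of requests that don't need the embedded data.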

The decision: ETag header on every response.

Every detail response includes a strong ETag header derived from the transaction's version. This enables the concurrency model for the next endpoint — and gives the front-end a free conditional request optimization via If-None-Match on subsequent reads.
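The conditional read can be sketched as below. The version counter and the "v<number>" tag format are assumptions; the text only says the ETag is strong and derived from the transaction's version.

```typescript
interface StoredTransaction {
  id: string;
  version: number; // hypothetical counter bumped on every write
  amount: number;
  note: string | null;
}

interface HttpResponse {
  status: number;
  headers: Record<string, string>;
  body: StoredTransaction | null;
}

// A strong ETag is a quoted string with no "W/" prefix.
function etagFor(tx: StoredTransaction): string {
  return `"v${tx.version}"`;
}

// If-None-Match may carry "*" or a comma-separated list, and is compared
// weakly: a "W/" prefix on the client's tag is ignored. Splitting on commas
// is safe here only because these tags never contain one.
function noneMatchHits(headerValue: string, currentEtag: string): boolean {
  return headerValue
    .split(",")
    .map((tag) => tag.trim().replace(/^W\//, ""))
    .some((tag) => tag === "*" || tag === currentEtag);
}

function getTransaction(tx: StoredTransaction, ifNoneMatch?: string): HttpResponse {
  const etag = etagFor(tx);
  if (ifNoneMatch !== undefined && noneMatchHits(ifNoneMatch, etag)) {
    // Nothing changed since the client's copy: no body is sent.
    return { status: 304, headers: { ETag: etag }, body: null };
  }
  return { status: 200, headers: { ETag: etag }, body: tx };
}
```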

3. PATCH /accounts/{accountId}/transactions/{transactionId} — annotate

The decision: PATCH with application/merge-patch+json, not PUT.

PUT has replacement semantics: you must send the entire resource or fields disappear. PATCH has partial-update semantics: you send only the fields you want to change. For transaction annotation, where the client modifies only note and category and has no business touching amount or timestamp, PATCH is the only responsible choice. We specified JSON Merge Patch (RFC 7396) rather than the operation-based JSON Patch (RFC 6902): the request body has the same shape as the resource, so there is no list of operations to interpret. One consequence worth documenting is that in a merge patch a null value removes the field.
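For readers unfamiliar with RFC 7396, the merge algorithm is short enough to sketch in full. The function and type names are ours; the behavior follows the pseudocode in the RFC.

```typescript
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };

function isObject(value: Json | undefined): value is JsonObject {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// RFC 7396 merge: objects merge key by key, null deletes a key, and any
// non-object patch (including arrays) replaces the target wholesale.
function mergePatch(target: Json | undefined, patch: Json): Json {
  if (!isObject(patch)) return patch;
  // Copy so the stored document is not mutated by the merge.
  const result: JsonObject = isObject(target) ? { ...target } : {};
  for (const key of Object.keys(patch)) {
    const value = patch[key];
    if (value === null) {
      delete result[key];
    } else {
      result[key] = mergePatch(result[key], value);
    }
  }
  return result;
}
```

Applied to an annotation, a body of {"note": "lunch", "category": null} sets the note and clears the category while leaving every other field untouched.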

The decision: If-Match header required, not optional.

The endpoint rejects requests that lack an If-Match: "<etag>" header with 428 Precondition Required. This prevents the classic race condition where two clients read the same transaction, both modify the note, and the second write silently overwrites the first. The front-end gets the ETag from the previous GET and passes it back. Under standard HTTP semantics, a request whose ETag no longer matches the current version is refused with 412 Precondition Failed, and the client must re-read before retrying. Lost updates become impossible by design.
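The race can be sketched as follows. The 428 comes from the text above; the 412 for a stale tag is standard HTTP behavior and an assumption about this spec, and the version counter is hypothetical.

```typescript
interface AnnotationRecord {
  version: number; // hypothetical counter; the ETag is derived from it
  note: string;
}

function etagOf(record: AnnotationRecord): string {
  return `"v${record.version}"`;
}

// Returns the HTTP status the endpoint would answer with.
function patchNote(current: AnnotationRecord, ifMatch: string | undefined, note: string): number {
  if (ifMatch === undefined || ifMatch.trim() === "") return 428; // header missing
  // If-Match uses strong comparison, so a weak "W/..." tag never matches.
  // List values and "*" are left out of this sketch.
  if (ifMatch.trim() !== etagOf(current)) return 412; // stale or unknown tag
  current.note = note;
  current.version += 1; // invalidates every ETag handed out earlier
  return 200;
}
```

Two clients that both read version 1 will each send If-Match: "v1". The first write succeeds and bumps the version; the second is refused instead of overwriting the first.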

The decision: editable fields explicitly enumerated in the schema.

The PATCH request schema lists exactly two editable fields: note (string, max 500 chars) and category (enum of allowed values). Any other field in the payload triggers a 400 Bad Request with a specific error code. This is defensive API design: the endpoint refuses to pretend it can do something it can't.
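A minimal validation sketch for that schema is shown below. The category values other than groceries and all error code names are hypothetical, and clearing a field with null is left out for brevity.

```typescript
// Hypothetical enum values; the source only shows "groceries" as an example.
const ALLOWED_CATEGORIES: readonly string[] = ["groceries", "transport", "utilities", "other"];
const MAX_NOTE_LENGTH = 500;

interface AnnotationChanges {
  note?: string;
  category?: string;
}

type ValidationResult =
  | { status: 200; changes: AnnotationChanges }
  | { status: 400; code: string; field: string };

function reject(code: string, field: string): ValidationResult {
  return { status: 400, code, field };
}

function validateAnnotationPatch(payload: Record<string, unknown>): ValidationResult {
  const changes: AnnotationChanges = {};
  for (const field of Object.keys(payload)) {
    const value = payload[field];
    if (field === "note") {
      if (typeof value !== "string") return reject("INVALID_TYPE", field);
      if (value.length > MAX_NOTE_LENGTH) return reject("NOTE_TOO_LONG", field);
      changes.note = value;
    } else if (field === "category") {
      if (typeof value !== "string" || ALLOWED_CATEGORIES.indexOf(value) === -1) {
        return reject("INVALID_CATEGORY", field);
      }
      changes.category = value;
    } else {
      // Anything outside the two enumerated fields is refused, not ignored.
      return reject("FIELD_NOT_EDITABLE", field);
    }
  }
  return { status: 200, changes };
}
```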

4. DELETE /accounts/{accountId}/transactions/{transactionId}/annotations — remove metadata

The decision: delete the annotation, not the transaction.

The DELETE verb is dangerous if misapplied. Transactions are financial records and cannot be deleted by a user — ever. What a user can delete is the annotation metadata they added themselves. So the endpoint targets the annotation sub-resource explicitly: .../transactions/{id}/annotations, not .../transactions/{id}.

Making this distinction in the URL itself (rather than relying on a query param or a field in the body) means that a misconfigured client cannot accidentally delete a transaction even if it wanted to. The route literally doesn't exist. Defense in depth starts at the URL layer.

The decision: idempotent delete.

Deleting an annotation that's already gone returns 204 No Content, not 404 Not Found. HTTP permits either status, because idempotency constrains the effect on server state rather than the response code, but returning 204 means client retry logic needs no special case. If the network drops mid-response, the client can retry the DELETE and treat any 204 as success. Idempotency is not a nice-to-have on a mobile API where the network is unreliable; it's a correctness requirement.
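In code, the rule is almost trivially small, which is part of its appeal. The AnnotationStore class is a hypothetical in-memory stand-in for whatever the back-end uses.

```typescript
class AnnotationStore {
  private readonly annotations = new Map<string, { note: string }>();

  annotate(transactionId: string, note: string): void {
    this.annotations.set(transactionId, { note });
  }

  has(transactionId: string): boolean {
    return this.annotations.has(transactionId);
  }

  // Returns the HTTP status for DELETE .../transactions/{id}/annotations.
  // Whether anything was actually removed is deliberately not reflected
  // in the status, so a retried request looks the same as the first one.
  deleteAnnotations(transactionId: string): number {
    this.annotations.delete(transactionId);
    return 204;
  }
}
```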

Breaking changes we're explicitly forbidding

API contracts are forever. The features you add to v1 are the features you cannot remove from v2 without breaking every consumer who built against the original. Serious API design lists the changes that are off the table forever — the constraints future engineers must accept as inherited.

- The cursor format will never become client-parseable. The cursor string is opaque by contract. Consumers that try to decode it lose their compatibility guarantee the moment we change it. This is documented in the spec and the mock server emits random-looking base64 to discourage reverse engineering.

- The transaction ID format will never change. It's a UUID v7 today. It will still be a UUID v7 in five years, even if the team migrates to something else internally. A publicly exposed ID is a permanent decision.

- No field will ever be removed from a response. Only new fields will be added. Clients that break on unknown fields are broken clients, but clients that relied on a field's existence are correct clients.

- No required request field will ever be added. Any new request field must have a default value or be optional. Breaking the request shape means breaking every client on the day of the change.

- Error codes are append-only. New error conditions get new codes. Existing error codes keep their meaning forever, even if the internal implementation shifts.

These aren't restrictions imposed on the future. They're the contract the spec makes with every engineer who will inherit it.
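Three of these rules are mechanical enough to check in CI. The sketch below compares two hand-written snapshots of the contract; the ContractSnapshot shape is hypothetical and is not something Spectral or Schemathesis provide. It cannot detect an error code whose meaning has drifted, a cursor that became parseable, or a changed ID format.

```typescript
interface ContractSnapshot {
  responseFields: string[];
  requiredRequestFields: string[];
  errorCodes: string[];
}

// Names present in `before` that are absent from `after`.
function missingFrom(before: string[], after: string[]): string[] {
  return before.filter((name) => after.indexOf(name) === -1);
}

function findBreakingChanges(prev: ContractSnapshot, next: ContractSnapshot): string[] {
  const removedFields = missingFrom(prev.responseFields, next.responseFields);
  const newRequired = missingFrom(next.requiredRequestFields, prev.requiredRequestFields);
  const removedCodes = missingFrom(prev.errorCodes, next.errorCodes);
  return [
    ...removedFields.map((f) => `response field removed: ${f}`),
    ...newRequired.map((f) => `required request field added: ${f}`),
    ...removedCodes.map((c) => `error code removed: ${c}`),
  ];
}
```

An empty result means the new snapshot only adds response fields, optional request fields, or error codes, which is exactly what the rules above allow.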

Why we rejected three common API design choices

The HTTP method choices above are half the story. The other half is the three patterns we considered and rejected — each of which would have shipped faster and been wrong.

- GraphQL. Superficially tempting for a transaction query with variable shape needs. Rejected because GraphQL's real cost isn't learning it; it's the operational burden (query cost analysis, depth limiting, persisted queries) that a four-endpoint API never justifies. REST is boring, widely understood, and correct here.

- Auto-generated OpenAPI from code. Some teams write the back-end first and generate the spec from decorators. This is backwards. When the code is the source of truth, the spec becomes documentation of whatever the code happens to do, which is not the same thing as the contract the code should have implemented. The spec leads, always.

- A dedicated SDK client. The front-end team asked about a TypeScript SDK generated from the OpenAPI spec. Rejected for this phase because auto-generated SDKs tend to leak implementation details and force consumer upgrades on every schema change. A small hand-written client over fetch is easier to maintain, easier to debug, and doesn't become a second API surface to version.
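To give a sense of scale for that hand-written client, here is a sketch of its core as pure request builders. The function names and the RequestSpec shape are hypothetical; the routes, headers, and media type come from the endpoint decisions above. The network call itself is left to the caller.

```typescript
interface RequestSpec {
  method: "GET" | "PATCH" | "DELETE";
  url: string;
  headers: Record<string, string>;
  body?: string;
}

interface ListParams {
  cursor?: string;
  from?: string;
  to?: string;
  category?: string;
}

function transactionsUrl(accountId: string, transactionId?: string): string {
  const base = `/accounts/${encodeURIComponent(accountId)}/transactions`;
  return transactionId === undefined ? base : `${base}/${encodeURIComponent(transactionId)}`;
}

function listRequest(accountId: string, params: ListParams = {}): RequestSpec {
  const pairs: string[] = [];
  const add = (name: string, value: string | undefined): void => {
    if (value !== undefined) pairs.push(`${name}=${encodeURIComponent(value)}`);
  };
  add("cursor", params.cursor); // passed back verbatim, never parsed
  add("from", params.from);
  add("to", params.to);
  add("category", params.category);
  const query = pairs.length > 0 ? `?${pairs.join("&")}` : "";
  return { method: "GET", url: transactionsUrl(accountId) + query, headers: { Accept: "application/json" } };
}

function annotateRequest(
  accountId: string,
  transactionId: string,
  etag: string, // taken from the ETag header of the previous GET
  changes: { note?: string; category?: string },
): RequestSpec {
  return {
    method: "PATCH",
    url: transactionsUrl(accountId, transactionId),
    headers: { "Content-Type": "application/merge-patch+json", "If-Match": etag },
    body: JSON.stringify(changes),
  };
}

function deleteAnnotationsRequest(accountId: string, transactionId: string): RequestSpec {
  return { method: "DELETE", url: `${transactionsUrl(accountId, transactionId)}/annotations`, headers: {} };
}
```

Each RequestSpec maps directly onto a fetch call, for example fetch(spec.url, { method: spec.method, headers: spec.headers, body: spec.body }). Keeping the builders pure makes them testable without a network or a mock server.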

Tech stack

- OpenAPI 3.0 / YAML (the specification itself)

- Swagger Editor (authoring)

- Spectral (linting the spec against style rules)

- Prism (mock server, enables front-end development before back-end exists)

- Schemathesis (property-based conformance testing, catches edge cases hand-written tests miss)

- Dredd (example-based conformance testing as a fallback layer)

- The existing React + Redux Toolkit front-end from Phase 1 as the first consumer

What we'd do differently with a real client

The honesty section we run on every case study. For a paying neobank client with a production perimeter, here's what would change about this design work.

- Customer research before route design. The four endpoints match the brief perfectly. The brief itself wasn't validated against actual customer banking workflows. A real engagement would start with five interviews and probably discover that the "annotation" endpoint is the wrong abstraction.

- A real versioning strategy document, not just a "no breaking changes" rule. The rule works for six months. After that, you need a deprecation policy, a versioning header, and a plan for when v2 happens. This spec documents none of that because the engagement didn't call for it.

- Rate limiting and quota documentation. The spec says nothing about how many requests per minute a client can make. In a real API, rate limits are part of the contract and need to be specified: per-endpoint, per-user, per-IP, with documented backoff headers (Retry-After, X-RateLimit-*).

- Webhook specification for push events. A real transactions API in 2026 doesn't work on polling. Clients need to be notified of new transactions via webhooks or server-sent events. Designing the push surface is at least as much work as designing the pull surface, and it's not in this spec.

- Audit log endpoints. Banking regulators require audit trails on every data access. A real engagement specifies the audit log endpoints alongside the business endpoints, with immutability guarantees. This spec doesn't.

- OpenAPI extensions for security classification. x-security-sensitivity: financial tags on schemas let downstream tooling (SAST scanners, data lineage trackers) treat the fields appropriately. A production engagement would include these extensions from the first commit.

What this engagement reveals

API design is the most consequential engineering decision in any multi-team product, and it's the decision most often made by whoever happens to be coding the endpoint at 4pm on a Thursday. Serious API design is spec-first, contract-driven, and pessimistic about consumer behavior — and it produces APIs that survive three years of consumer growth without emergency rewrites. The discipline is in the writing, not the typing. We think this way because we've seen what happens when nobody does.

If you're designing an API that more than one team will consume — especially if one of those teams is external to your company — this is the engagement we're built for. The first call is a 30-minute working session where we look at your current contract (or lack of one), your consumer mix, and your versioning constraints, and tell you honestly whether the API you're about to ship will hold up at 10x the current traffic. If the answer is "you need an API architect, not a studio," we'll say so in the call. Straight answers only.

This case study is Phase 2 of a two-phase engagement. The Phase 1 case study covers the front-end security design doc — how we built the authentication layer that will consume this API.